Claude Opus 4.6: What Developers Need to Know in 2026

Discover how Claude Opus 4.6's agent teams, smarter reasoning, and longer context will reshape developer workflows, plus what to expect, the benefits, and migration tips.

Sohail Shaikh

Author


There's a phrase Scott White, Anthropic's Head of Product, used when announcing Claude Opus 4.6: "vibe working."

It sounds like startup jargon, but it points at something real. A year ago, most developers used Claude to answer a question, draft a snippet, or get unstuck on a bug. It was a great assistant. Now, with Opus 4.6, the workflow is shifting — you hand it a real task, set some parameters, and walk away. It just... does the work.

That shift has implications for how we build, how we think about code review, and frankly, how we structure our engineering teams. This isn't hype. Let's walk through what actually changed, what it means for your daily work, and a few honest caveats worth knowing before you migrate.

What Is Claude Opus 4.6?

Anthropic released Claude Opus 4.6 on February 5, 2026, just a few months after Opus 4.5 launched in November 2025. It's the flagship model in the Claude family — sitting above Sonnet and Haiku in capability and cost.

The upgrade covers four main areas: multi-agent collaboration, smarter reasoning, dramatically longer context, and new API primitives that give developers much finer control. Pricing stays flat at $5 per million input tokens and $25 per million output tokens, which means you're getting more power for the same spend.
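To make the pricing concrete, here is a small cost estimator that uses only the two list prices quoted above. It's a sketch: it ignores the premium long-context rate and Fast Mode, and `estimate_cost` is just an illustrative helper name.

```python
# Standard Opus 4.6 list prices from the article, in US dollars per million tokens.
INPUT_PRICE_PER_MTOK = 5.00
OUTPUT_PRICE_PER_MTOK = 25.00


def estimate_cost(input_tokens: int, output_tokens: int) -> float:
    """Return the estimated USD cost of one request at standard pricing.

    Assumes the prompt stays within the 200K standard window; long-context
    premium pricing and Fast Mode rates are deliberately not modeled.
    """
    input_cost = input_tokens / 1_000_000 * INPUT_PRICE_PER_MTOK
    output_cost = output_tokens / 1_000_000 * OUTPUT_PRICE_PER_MTOK
    return round(input_cost + output_cost, 6)


# A 150K-token prompt that produces an 8K-token answer.
print(estimate_cost(150_000, 8_000))
```

For that example, 150K input tokens cost $0.75 and 8K output tokens cost $0.20, so the request comes to about $0.95.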

Let's break each piece down.

1. Agent Teams: Your First Real AI Workforce

The headline feature. Opus 4.6 introduces agent teams directly within Claude Code — multiple Claude agents working in parallel on segmented pieces of a larger task, then coordinating to produce a unified result.

The analogy isn't perfect, but imagine assigning a feature request and having three agents simultaneously handle the backend logic, the frontend integration, and the test suite — then handing off to a fourth agent that reviews all three outputs for consistency.
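Agent teams live inside Claude Code, and this article doesn't describe a programmatic interface for them. What can be shown is the coordination shape the analogy describes: fan out to parallel specialists, then fan in to a reviewer. The sketch below uses Python's asyncio with hypothetical stub agents (`run_agent`, `review`) standing in for real model calls; it illustrates the pattern, not Anthropic's implementation.

```python
import asyncio


async def run_agent(role: str, task: str) -> str:
    """Hypothetical stand-in for one agent working on its slice of the task.

    A real pipeline would call a model here; this stub just echoes so the
    coordination pattern runs offline.
    """
    await asyncio.sleep(0)  # yield control, as a real network call would
    return f"[{role}] done: {task}"


async def review(outputs: list[str]) -> str:
    """Hypothetical reviewer agent that checks all parallel outputs together."""
    await asyncio.sleep(0)
    return f"reviewed {len(outputs)} outputs"


async def build_feature(feature: str) -> tuple[list[str], str]:
    roles = ["backend", "frontend", "tests"]
    # Fan out: the three specialist agents run concurrently.
    outputs = await asyncio.gather(*(run_agent(r, feature) for r in roles))
    # Fan in: a fourth agent reviews the combined outputs for consistency.
    verdict = await review(list(outputs))
    return list(outputs), verdict


if __name__ == "__main__":
    outputs, verdict = asyncio.run(build_feature("add CSV export"))
    print(outputs)
    print(verdict)
```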

💡 Practical impact: Agent teams are currently available in research preview for API users. If your team is building complex agentic workflows — CI pipelines, long-running data tasks, multi-step code generation — this is worth exploring early.

Michael Truell (co-founder of Cursor) and GitHub's Mario Rodriguez have both publicly pointed to this capability: it finally unlocks long-horizon tasks that previously required human coordination between tools or models.


2. Adaptive Thinking: Smarter, Not Just Louder

Extended Thinking was a useful feature in earlier Claude models, but it had a clunky interface: you'd allocate a fixed budget_tokens value and hope the model used it wisely. Opus 4.6 supersedes it with Adaptive Thinking.

Here's the core idea: instead of you specifying how much to think, Claude decides. Four effort levels give you control over the ceiling, not the floor.

The practical benefit: even at high effort, Claude can skip heavy reasoning on simpler prompts that don't need it, saving tokens and returning faster.

Migration note: thinking: {type: "enabled"} and budget_tokens are deprecated. They still work on 4.6 but will be removed in a future release. Start migrating to thinking: {type: "adaptive"} now.
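For code that builds request bodies as plain dictionaries, the thinking migration is a small, mechanical transform. A minimal sketch, assuming requests are Messages API JSON bodies held in dicts; `migrate_thinking` is a hypothetical helper, and it leaves every other field untouched.

```python
import copy


def migrate_thinking(request: dict) -> dict:
    """Return a copy of a request body with the deprecated extended-thinking
    config replaced by adaptive thinking. The input dict is not modified."""
    migrated = copy.deepcopy(request)
    thinking = migrated.get("thinking")
    if isinstance(thinking, dict) and thinking.get("type") == "enabled":
        # budget_tokens is deprecated; adaptive thinking decides its own budget.
        migrated["thinking"] = {"type": "adaptive"}
    return migrated


old_request = {
    "model": "claude-opus-4-5",  # previous model ID (illustrative)
    "max_tokens": 16000,
    "thinking": {"type": "enabled", "budget_tokens": 10000},
    "messages": [{"role": "user", "content": "Refactor this module."}],
}
new_request = migrate_thinking(old_request)
new_request["model"] = "claude-opus-4-6"
print(new_request["thinking"])
```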

3. The 1M Token Context Window (Finally)

Context management has been one of the persistent frustrations with large language models. Opus 4.5's "Infinite Chats" feature addressed one side of the problem. Opus 4.6 goes further.

The model ships with a 200K default context window, with a 1M token context window available in beta. The MRCR v2 benchmark — which tests retrieval accuracy across very long documents — tells the story clearly:

| Model | MRCR v2 Score (1M context) |
|---|---|
| Claude Opus 4.6 | 76% |
| Claude Sonnet 4.5 | 18.5% |

The "context rot" problem, where model quality degrades as conversations grow longer, is substantially reduced at this scale. For developers working with large codebases, long document sets, or extended agentic sessions, this is genuinely meaningful.

A few things to keep in mind:

- The 1M context window is in beta and may not yet be suitable for production at scale.

- Premium pricing applies for prompts exceeding 200K tokens.

- The standard context window remains 200K, so most use cases don't need to change anything.
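If you want to route requests by size, the thresholds above reduce to a simple check. This sketch assumes you already have a prompt token count (from your own estimate or the API's token-counting endpoint); `context_tier` and its labels are illustrative, and it doesn't compute the premium rate because the article doesn't state it.

```python
STANDARD_CONTEXT = 200_000  # default context window, in tokens
BETA_CONTEXT = 1_000_000    # long-context window available in beta


def context_tier(prompt_tokens: int) -> str:
    """Classify a prompt by the context tier it needs, using the article's thresholds."""
    if prompt_tokens <= STANDARD_CONTEXT:
        return "standard"
    if prompt_tokens <= BETA_CONTEXT:
        # Over 200K: requires the 1M beta, and premium pricing applies.
        return "1M beta (premium pricing)"
    return "too large: trim, retrieve, or compact first"


for n in (120_000, 350_000, 1_400_000):
    print(n, context_tier(n))
```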

4. Context Compaction and the Infinite Conversation API

Alongside the larger context window, Anthropic introduced the Compaction API — a server-side mechanism that automatically summarizes older conversation segments when you approach the token limit.

This enables effectively infinite conversations without hitting context-limit errors or pruning context by hand. For agentic workflows where a task might run for hours and accumulate thousands of turns, this changes the architecture significantly. You no longer need to build custom summarization layers or manage rolling windows manually.
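The article doesn't list the Compaction API's parameters, so the sketch below does not call it. Instead it shows, client-side, the idea the server now handles for you: once history exceeds a budget, older turns collapse into one summary and recent turns stay verbatim. The ~4-characters-per-token heuristic, `compact`, and the stub summarizer are all assumptions for illustration.

```python
from typing import Callable

Message = dict  # shape: {"role": "user" | "assistant", "content": str}


def rough_tokens(text: str) -> int:
    # Assumption: ~4 characters per token. A crude heuristic, not a tokenizer.
    return max(1, len(text) // 4)


def compact(history: list[Message], limit: int, keep_recent: int,
            summarize: Callable[[list[Message]], str]) -> list[Message]:
    """Collapse older turns into one summary message once history exceeds limit.

    The most recent keep_recent messages are always kept verbatim.
    """
    if keep_recent < 1:
        raise ValueError("keep_recent must be at least 1")
    total = sum(rough_tokens(m["content"]) for m in history)
    if total <= limit or len(history) <= keep_recent:
        return history  # under budget, or nothing old enough to compact
    older, recent = history[:-keep_recent], history[-keep_recent:]
    summary = {"role": "user",
               "content": "Summary of earlier conversation: " + summarize(older)}
    return [summary] + recent


# Ten 400-character turns (~100 tokens each), compacted against a 500-token budget.
history = [{"role": "user" if i % 2 == 0 else "assistant", "content": "x" * 400}
           for i in range(10)]
compacted = compact(history, limit=500, keep_recent=4,
                    summarize=lambda msgs: f"{len(msgs)} earlier messages")
print(len(compacted), compacted[0]["content"])
```

In a real pipeline `summarize` would itself be a model call, and you would also have to keep user/assistant roles valid around the inserted summary. That bookkeeping is exactly what a server-side mechanism takes off your hands.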

5. API Changes That Need Your Attention Now

This section matters if you're an existing API user. Two changes require action:

🚨 Breaking Change: Assistant message prefilling is disabled on Opus 4.6. Requests with prefilled assistant messages return a 400 error. If your code uses last-assistant-turn prefills to steer outputs, migrate to structured outputs or system prompt instructions before switching to the new model ID.
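One way to migrate a prefill is to drop the trailing assistant turn and restate its intent as a system-prompt instruction. A sketch, assuming request bodies are dicts whose message `content` is a plain string (not a list of content blocks); `remove_prefill` is a hypothetical helper. For JSON output specifically, structured outputs are the sturdier replacement, since an instruction is a request rather than a guarantee.

```python
def remove_prefill(request: dict) -> dict:
    """Return a copy of the request with any trailing assistant prefill removed
    and its steering restated in the system prompt. The input is not modified."""
    messages = list(request.get("messages", []))  # shallow copy of the list
    new_request = dict(request)
    if messages and messages[-1]["role"] == "assistant":
        prefill = messages.pop()["content"]
        instruction = f"Begin your response with: {prefill}"
        system = request.get("system")
        # Append to an existing system prompt rather than overwriting it.
        new_request["system"] = f"{system}\n\n{instruction}" if system else instruction
    new_request["messages"] = messages
    return new_request


request = {
    "model": "claude-opus-4-6",
    "max_tokens": 1024,
    "messages": [
        {"role": "user", "content": "List three colors as JSON."},
        {"role": "assistant", "content": "{"},  # prefill: now a 400 error
    ],
}
print(remove_prefill(request))
```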

The second change is more subtle: Opus 4.6 may produce slightly different JSON string escaping in tool call arguments (e.g., Unicode escape handling, forward slash escaping). Standard parsers like json.loads() or JSON.parse() handle this automatically — but if you're parsing tool call input as a raw string, verify your logic still works.
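The difference is easy to see with Python's standard json module: two raw argument strings can differ character-for-character yet decode to the same object. The strings below are made-up examples, not captured model output.

```python
import json

# Two raw tool-call argument strings encoding the same value differently:
# one escapes the forward slash and the accented character, one does not.
escaped = '{"path": "docs\\/caf\\u00e9.md"}'
plain = '{"path": "docs/café.md"}'

# Comparing raw strings treats them as different...
print(escaped == plain)
# ...but a standard JSON parser decodes both to the same dict.
print(json.loads(escaped) == json.loads(plain))
```

If your code does the first kind of comparison (or regex-matches the raw argument text), that's the logic to re-test; anything that parses first is unaffected.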

New model ID: claude-opus-4-6 (no date suffix).

New API Capabilities Worth Exploring

| Feature | What It Does |
|---|---|
| Fast Mode | Up to 2.5× faster output at $30/$150 per MTok. Same intelligence, faster inference. |
| 128K Output Tokens | Doubled from 64K. Enables richer single-response outputs. |
| Data Residency Controls | Route inference to "global" or "us" per request using inference_geo. |
| Fine-grained Tool Streaming | Now GA on all models. No beta header required. |
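Two of these rows translate directly into request-building code: the sketch below validates max_tokens against the 128K ceiling and restricts inference_geo to the two values listed. `build_request` is hypothetical, and placing inference_geo as a top-level request field is an assumption based on the table's wording, so confirm it against the API reference. Fast Mode is left out because the article doesn't say how it's enabled.

```python
MAX_OUTPUT_TOKENS = 128_000      # Opus 4.6 output ceiling, per the table
ALLOWED_GEOS = {"global", "us"}  # the two inference_geo values listed


def build_request(prompt: str, max_tokens: int, geo: str = "global") -> dict:
    """Build a Messages API request body (as a dict) with basic validation."""
    if not 0 < max_tokens <= MAX_OUTPUT_TOKENS:
        raise ValueError(f"max_tokens must be in 1..{MAX_OUTPUT_TOKENS}")
    if geo not in ALLOWED_GEOS:
        raise ValueError(f"inference_geo must be one of {sorted(ALLOWED_GEOS)}")
    return {
        "model": "claude-opus-4-6",
        "max_tokens": max_tokens,
        # Assumption: inference_geo is a top-level request field.
        "inference_geo": geo,
        "messages": [{"role": "user", "content": prompt}],
    }


print(build_request("Summarize this changelog.", 4_096, geo="us"))
```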

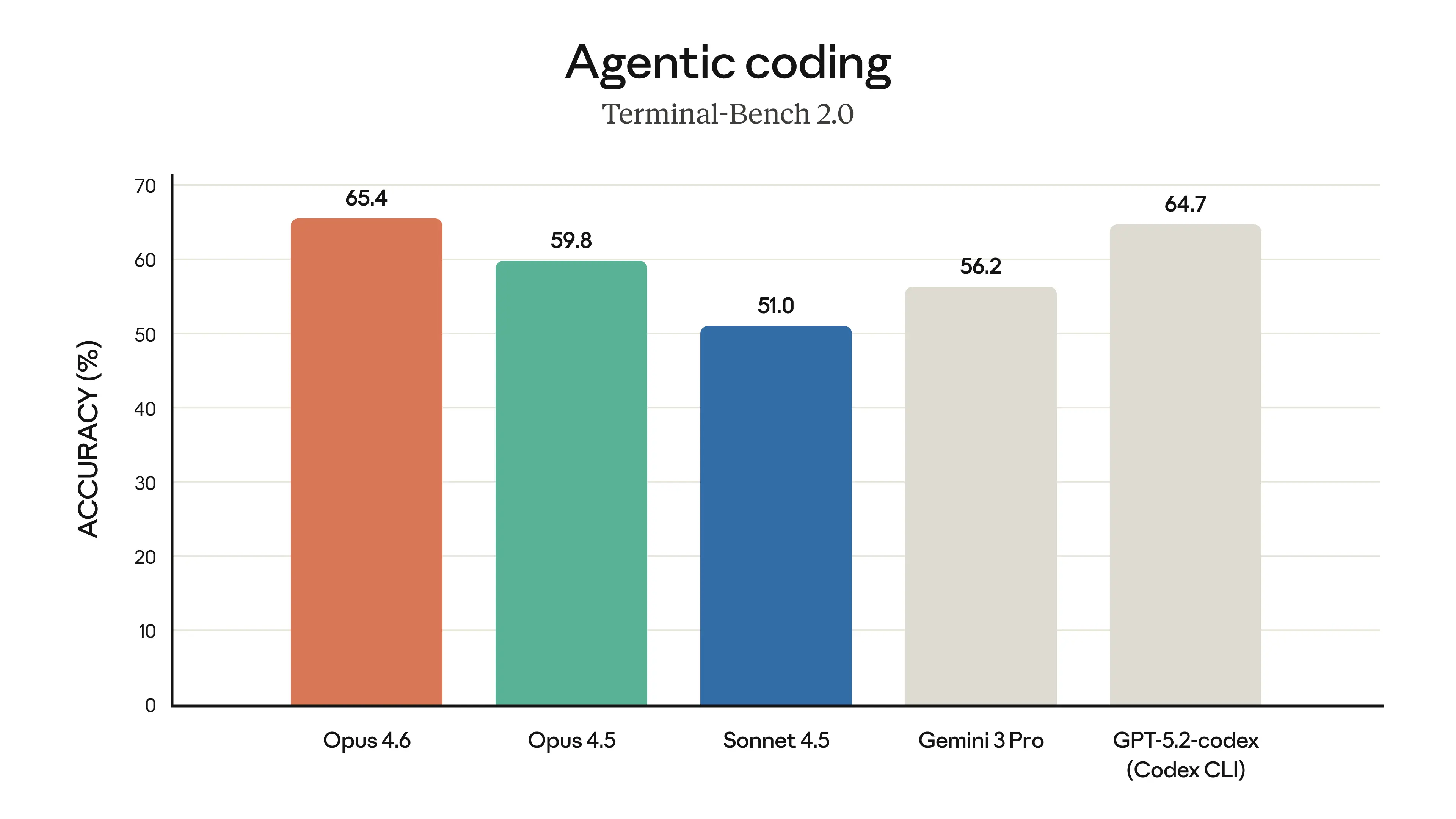

6. How It Stacks Up: Benchmark Reality Check

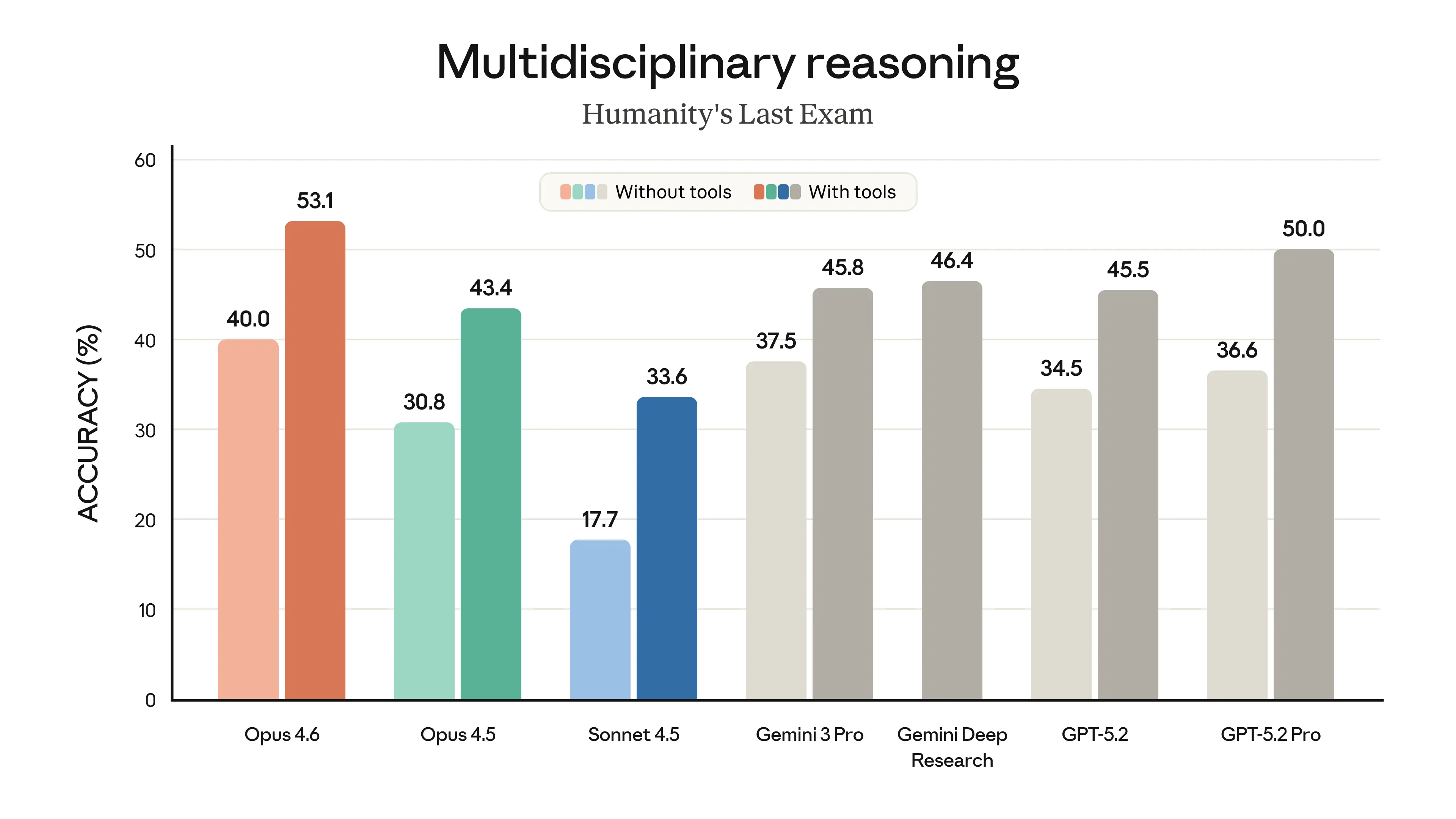

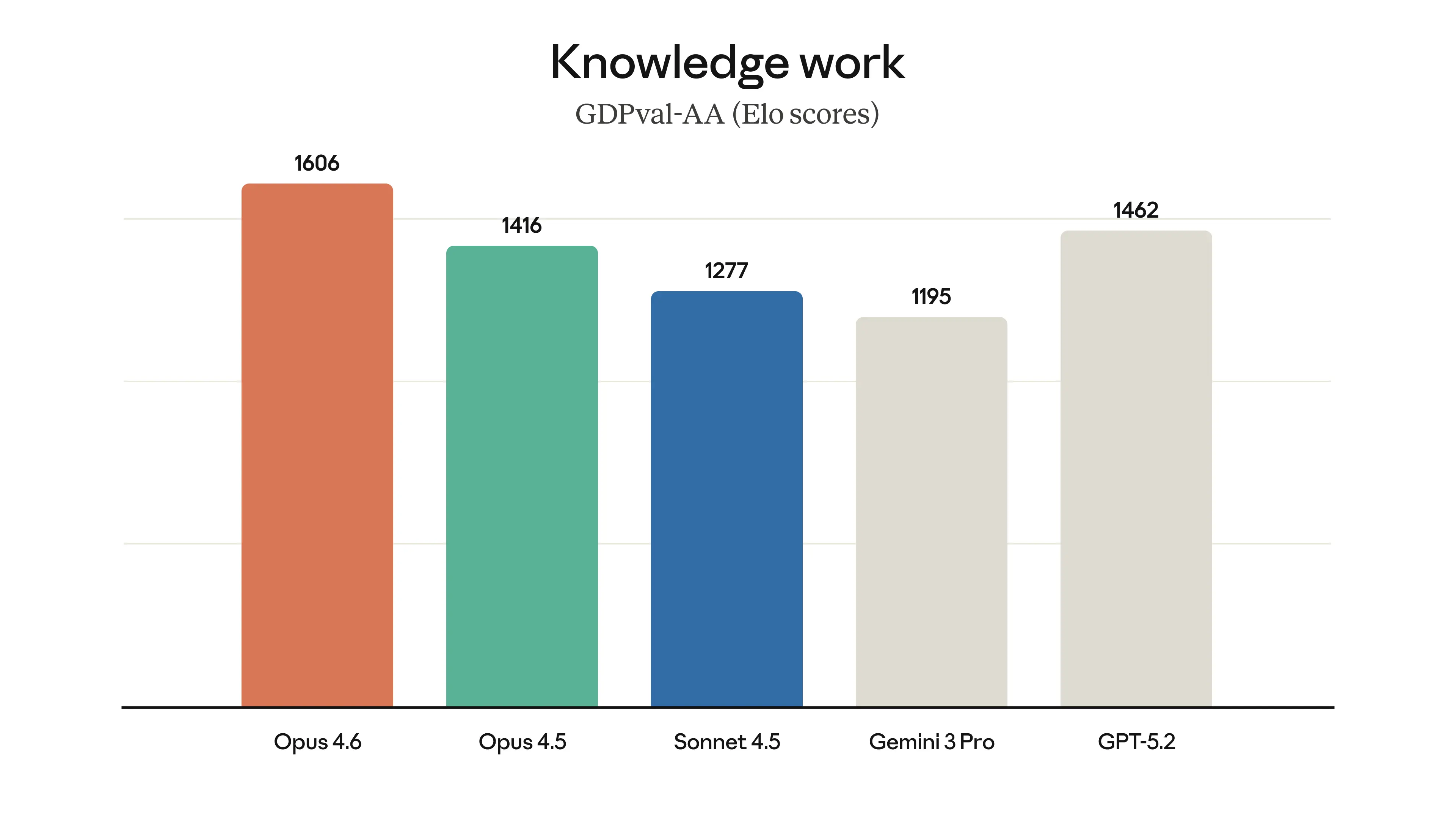

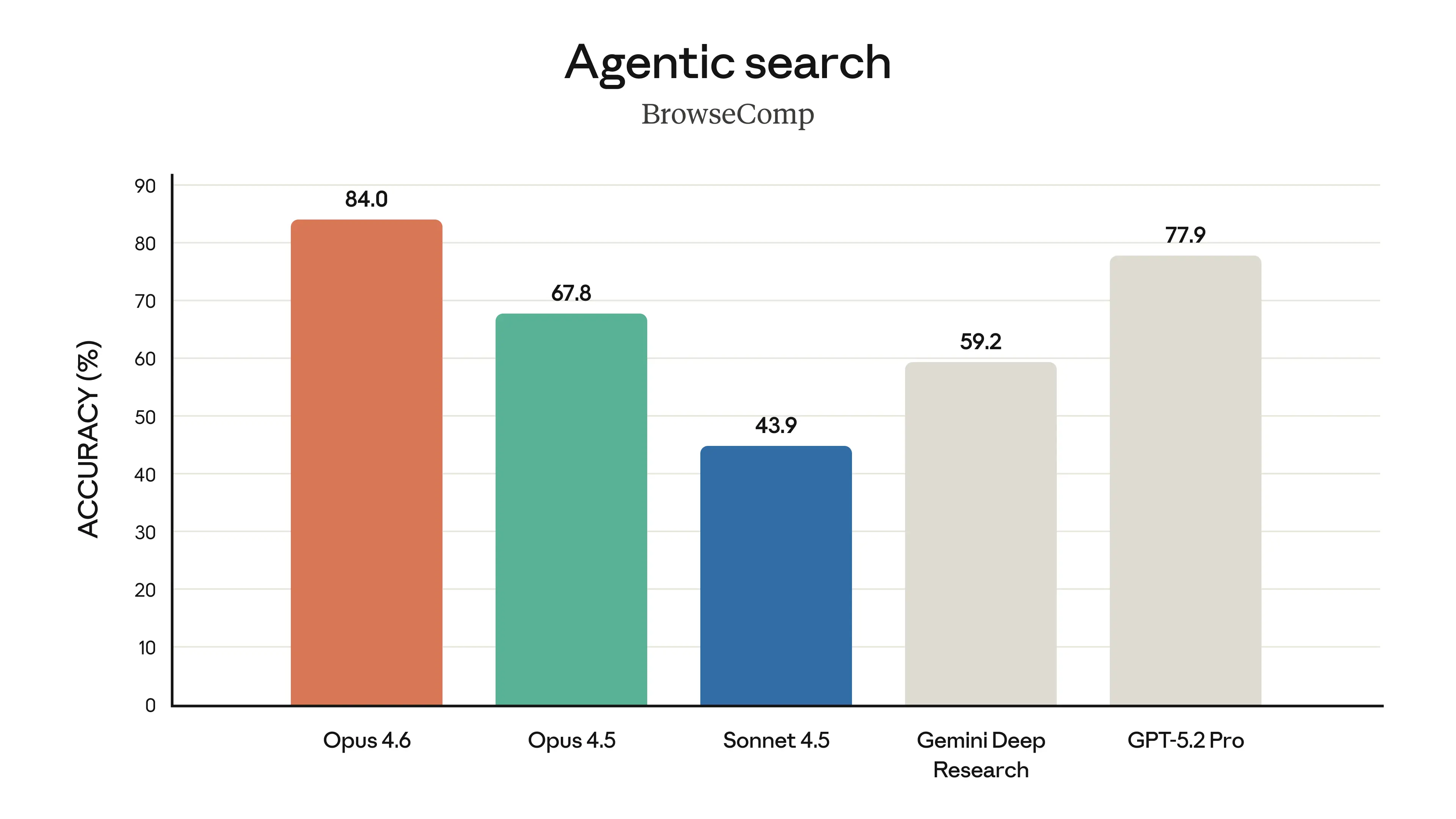

Benchmarks are imperfect measures, but they're not useless. Here's an honest look at where Opus 4.6 leads — and where competitors still hold ground.

The takeaway: Opus 4.6 leads meaningfully in coding tasks, long-context reliability, and enterprise knowledge work (legal, finance). GPT-5.2 holds a slight edge on graduate-level reasoning benchmarks. Gemini 3 Pro still has the largest native context window at 2M tokens.

No single model wins everything. Your choice should depend on your actual use case, not headline scores.

Opus 4.6 is state-of-the-art on real-world work tasks across several professional domains.

7. Integrations: Where Opus 4.6 Now Lives

Beyond the core API, Anthropic has pushed Opus 4.6 into several enterprise workflows:

- Claude in PowerPoint (Preview): Claude generates presentations natively inside PowerPoint, matching your existing colors, fonts, and layouts.

- Claude in Excel: Interprets messy, unexplained spreadsheets without you needing to describe the schema.

- Microsoft Foundry / Azure: Available for enterprise teams building on Azure infrastructure.

- Amazon AWS, Google Cloud: Standard cloud availability at launch.

- claude.ai chatbot interface: Available directly for interactive use.

The Honest Limitations

For all the progress, a few things are worth flagging before you get excited:

- Agent teams are in research preview — production use is premature for mission-critical workflows.

- The 1M context window is in beta and carries premium pricing beyond 200K tokens. Budget accordingly.

- Fast Mode costs significantly more: 6× the standard price for both input and output tokens ($30/$150 vs. $5/$25 per MTok). It's powerful, but it's not a default switch.

- Adaptive Thinking at high effort (the default) can still "overthink" simpler tasks. Anthropic's own recommendation is to dial effort to medium for lightweight use cases.

- As with all AI coding assistance, Opus 4.6 doesn't eliminate the need for code review. It's a faster, smarter pair programmer, not a replacement for engineering judgment.
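The effort recommendation can be encoded as a per-task default. In this sketch the task labels in `LIGHTWEIGHT_TASKS` are hypothetical, and `output_config.effort` is an assumption about where the effort level goes in the request body; check the API reference before relying on it. Only the two levels the article names, medium and high, are used.

```python
# Hypothetical task labels your application might attach to requests.
LIGHTWEIGHT_TASKS = {"classify", "extract", "rename", "summarize"}


def with_effort(request: dict, task_kind: str) -> dict:
    """Return a copy of the request with adaptive thinking and an effort level
    chosen by task kind: medium for lightweight work, otherwise the default high.

    Assumption: effort is set via output_config.effort.
    """
    effort = "medium" if task_kind in LIGHTWEIGHT_TASKS else "high"
    updated = dict(request)
    updated["thinking"] = {"type": "adaptive"}
    # Merge into any existing output_config instead of replacing it.
    updated["output_config"] = {**request.get("output_config", {}), "effort": effort}
    return updated


base = {"model": "claude-opus-4-6", "max_tokens": 2048, "messages": []}
print(with_effort(base, "classify")["output_config"])
print(with_effort(base, "refactor")["output_config"])
```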

What This Means for Your Workflow

The practical shift with Opus 4.6 isn't just that the model is more capable. It's that the architecture of how you use AI is starting to look different.

Single-agent, single-turn workflows are becoming less interesting. The developers getting the most value are the ones designing multi-agent pipelines, using context compaction to sustain long tasks, and treating model output as a first draft that the system reviews and improves on its own.

That's a different skill set than "write a good prompt." It's closer to system design — and it's where the leverage is headed.
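Structurally, "a first draft that the system reviews and improves on its own" is a bounded generate, critique, revise loop. A sketch with hypothetical callables (`draft`, `critique`, `revise`) standing in for model calls; the round cap matters, because a reviewer that always finds something would otherwise loop forever.

```python
from typing import Callable


def refine(task: str,
           draft: Callable[[str], str],
           critique: Callable[[str, str], list[str]],
           revise: Callable[[str, list[str]], str],
           max_rounds: int = 3) -> tuple[str, int]:
    """Produce a draft, then alternate review and revision until the reviewer
    reports no issues or max_rounds revisions have been made.

    Returns (final_result, number_of_revisions).
    """
    result = draft(task)
    for round_no in range(1, max_rounds + 1):
        issues = critique(task, result)
        if not issues:
            return result, round_no - 1  # accepted after round_no - 1 revisions
        result = revise(result, issues)
    return result, max_rounds  # cap reached; result has not been re-reviewed


# Toy stubs: the "model" writes a buggy function, the reviewer catches it once.
final, revisions = refine(
    "add two numbers",
    draft=lambda t: "def add(a, b): return a - b",
    critique=lambda t, r: ["wrong operator"] if "-" in r else [],
    revise=lambda r, issues: r.replace("-", "+"),
)
print(revisions, final)
```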

Quick Migration Checklist

If you're upgrading from Opus 4.5:

- Update model ID to claude-opus-4-6

- Remove any assistant message prefills (migrate to structured outputs)

- Switch from thinking: {type: "enabled"} to thinking: {type: "adaptive"}

- Verify raw JSON string parsing still works correctly

- Evaluate effort levels: consider setting medium for lightweight tasks

- Review token pricing if you exceed 200K context per request
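A small pre-flight check can run the mechanically checkable parts of this list against existing request bodies before you flip the model ID. A sketch over plain dicts; `audit_request` is a hypothetical helper, and the JSON-parsing, effort, and pricing items still need a human look.

```python
def audit_request(request: dict) -> list[str]:
    """Return human-readable problems found in a request body; empty means clean."""
    problems = []
    if request.get("model") != "claude-opus-4-6":
        problems.append("model ID is not claude-opus-4-6")
    messages = request.get("messages", [])
    if messages and messages[-1].get("role") == "assistant":
        problems.append("last message is an assistant prefill (400 error on Opus 4.6)")
    thinking = request.get("thinking") or {}
    if thinking.get("type") == "enabled" or "budget_tokens" in thinking:
        problems.append("deprecated thinking config; switch to {'type': 'adaptive'}")
    return problems


legacy = {
    "model": "claude-opus-4-5",  # previous model ID (illustrative)
    "thinking": {"type": "enabled", "budget_tokens": 8000},
    "messages": [
        {"role": "user", "content": "Return JSON."},
        {"role": "assistant", "content": "{"},
    ],
}
for problem in audit_request(legacy):
    print("-", problem)
```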

Closing Thoughts

Claude Opus 4.6 is a genuine step forward — not a minor point release dressed up in marketing language. The agent teams feature alone changes what's architecturally possible for teams building on the Claude API. The adaptive thinking overhaul makes the reasoning system more usable in practice. And the 1M context window, even in beta, points toward a model that can hold an entire codebase in its head.

The keyword from Anthropic's own announcement — "vibe working" — is a useful frame. The best developers using Opus 4.6 won't be people who prompt harder. They'll be people who design systems where Claude does more of the sustained, parallel, coordinated work that used to require humans.

That shift is already happening. Now you have the tools to be deliberate about it.
